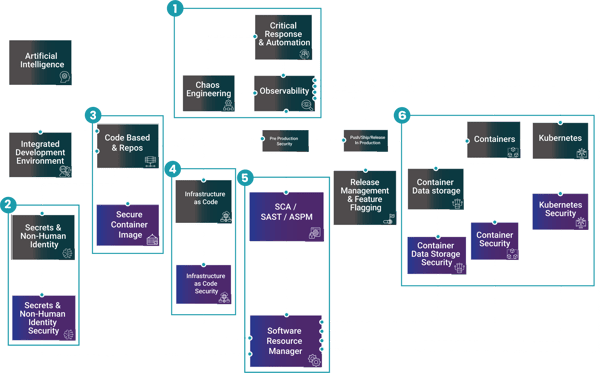

Artificial Intelligence for DevSecOps

AI is moving from experimentation to practical DevSecOps acceleration. Used well, it helps teams identify, prioritise, and remediate risk earlier without slowing delivery.

Teams can also use AI to automate triage by grouping findings, assigning ownership, and highlighting what is most likely to impact production.
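The grouping and ownership step described above can be sketched as a small, deterministic routine. This is a minimal sketch under stated assumptions: it is rule-based rather than an AI model (AI tooling would typically enrich or re-rank on top of a step like this), and the `Finding` fields, the `OWNERS` prefix map, the team names and the `in_production` signal are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str
    path: str
    severity: str        # "critical", "high", "medium" or "low"
    in_production: bool  # hypothetical signal: is this code deployed?


# Hypothetical ownership map, similar in spirit to a CODEOWNERS file.
OWNERS = {
    "services/payments/": "team-payments",
    "services/auth/": "team-identity",
}
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


def owner_for(path: str) -> str:
    # Longest matching path prefix wins; unmatched paths go to a default queue.
    matches = [prefix for prefix in OWNERS if path.startswith(prefix)]
    return OWNERS[max(matches, key=len)] if matches else "platform-triage"


def triage(findings: list[Finding]) -> list[dict]:
    # Group duplicates: the same rule firing in the same file is one work item.
    groups = defaultdict(list)
    for f in findings:
        groups[(f.rule_id, f.path)].append(f)

    rows = []
    for (rule_id, path), items in groups.items():
        worst = min(items, key=lambda f: SEVERITY_RANK[f.severity])
        rows.append({
            "rule_id": rule_id,
            "path": path,
            "count": len(items),
            "severity": worst.severity,
            "owner": owner_for(path),
            "in_production": any(f.in_production for f in items),
        })
    # Production-impacting groups first, then by severity.
    rows.sort(key=lambda r: (not r["in_production"], SEVERITY_RANK[r["severity"]]))
    return rows


# Made-up sample data for illustration only.
sample = [
    Finding("sql-injection", "services/payments/api.py", "high", True),
    Finding("sql-injection", "services/payments/api.py", "high", True),
    Finding("weak-hash", "services/auth/tokens.py", "medium", False),
    Finding("hardcoded-secret", "tools/dev_setup.py", "critical", False),
]
for row in triage(sample):
    print(row["owner"], row["rule_id"], row["severity"], row["count"])
```

Sorting on "in production" before severity is one possible design choice; a team that prefers pure severity ordering would simply swap the key.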

Artificial Intelligence

Problems

High Development Costs.

Solution

Uses compute power to generate responses from input requests or actions.

Artificial Intelligence

Developing software is getting more expensive as teams juggle faster delivery cycles, security requirements, and increasing operational complexity. Many organisations lack the time and specialist skills to keep up.

AI addresses this by using trained models and scalable compute to assist with coding, testing, documentation, and security tasks. By generating suggestions and responses from prompts and context, it helps teams ship faster, reduce rework, and improve quality without adding headcount.

In the pipeline, AI can review code changes for risky patterns, suggest secure-by-default configurations, and help generate policy-as-code or infrastructure-as-code (IaC) guardrails.
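To make "policy-as-code guardrails" concrete, here is the kind of rule an AI assistant might draft for a human to review. Teams usually express such rules in a policy engine such as OPA/Gatekeeper or Kyverno; the plain-Python version below is only a minimal sketch, and the particular checks chosen are assumptions rather than a complete security baseline. The `securityContext` field names are standard Kubernetes container fields.

```python
def check_pod(manifest: dict) -> list[str]:
    """Return guardrail violations for a Kubernetes Pod manifest (as a dict)."""
    violations = []
    for c in manifest.get("spec", {}).get("containers", []):
        name = c.get("name", "<unnamed>")
        sc = c.get("securityContext", {})
        if sc.get("privileged", False):
            violations.append(f"{name}: privileged containers are not allowed")
        # Treat an unset allowPrivilegeEscalation as permissive; require explicit false.
        if sc.get("allowPrivilegeEscalation", True):
            violations.append(f"{name}: set allowPrivilegeEscalation: false")
        # Simplification: ignores a pod-level securityContext.runAsNonRoot.
        if not sc.get("runAsNonRoot", False):
            violations.append(f"{name}: set runAsNonRoot: true")
        # Require an explicit tag or digest on the final image path segment.
        image = c.get("image", "")
        last_segment = image.rsplit("/", 1)[-1]
        if ":" not in last_segment or image.endswith(":latest"):
            violations.append(f"{name}: pin the image to an explicit tag or digest")
    return violations


# Hypothetical manifest: "web" breaks several rules, "api" passes.
pod = {
    "spec": {
        "containers": [
            {"name": "web", "image": "nginx:latest", "securityContext": {"privileged": True}},
            {
                "name": "api",
                "image": "registry.example.com/team/api:1.4.2",
                "securityContext": {"runAsNonRoot": True, "allowPrivilegeEscalation": False},
            },
        ]
    }
}
for violation in check_pod(pod):
    print(violation)
```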

In security operations, it can correlate findings from scanners, CI/CD logs, runtime signals, and cloud controls to reduce noise and surface the few issues that truly block a release.
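The correlation idea can be illustrated with a simple rule-based join between scanner output and runtime signals. This is a minimal sketch, not how any particular product works: an AI-assisted system would weigh far richer context, and the data classes, the CVSS threshold of 7.0, the "no runtime evidence means backlog, not blocker" policy and the sample data are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanFinding:
    vuln_id: str
    package: str
    cvss: float


@dataclass(frozen=True)
class RuntimeSignal:
    package: str
    loaded_at_runtime: bool
    internet_exposed: bool


def release_blockers(findings, signals, cvss_threshold=7.0):
    # 1. De-duplicate: several scanners often report the same issue.
    unique = {}
    for f in findings:
        key = (f.vuln_id, f.package)
        if key not in unique or f.cvss > unique[key].cvss:
            unique[key] = f

    # 2. Correlate with runtime signals by package name.
    runtime = {s.package: s for s in signals}
    blockers = []
    for f in unique.values():
        sig = runtime.get(f.package)
        # Assumption: without runtime evidence, a finding goes to the backlog
        # rather than blocking the release.
        if sig is None:
            continue
        if f.cvss >= cvss_threshold and sig.loaded_at_runtime and sig.internet_exposed:
            blockers.append(f)

    # 3. Highest severity first.
    return sorted(blockers, key=lambda f: f.cvss, reverse=True)


# Made-up sample data for illustration only.
findings = [
    ScanFinding("VULN-1", "openssl", 9.8),
    ScanFinding("VULN-1", "openssl", 9.8),  # same issue from a second scanner
    ScanFinding("VULN-2", "imagemagick", 8.1),
    ScanFinding("VULN-3", "zlib", 5.3),
]
signals = [
    RuntimeSignal("openssl", loaded_at_runtime=True, internet_exposed=True),
    RuntimeSignal("imagemagick", loaded_at_runtime=False, internet_exposed=True),
    RuntimeSignal("zlib", loaded_at_runtime=True, internet_exposed=True),
]
print(release_blockers(findings, signals))
```

Four raw findings collapse to one release blocker here: the duplicate is merged, the unloaded package and the low-severity issue drop out of the blocking set.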

For developers, copilots and chat-based workflows can turn security guidance into actionable fixes: explaining the impact, proposing a patch, and creating the right ticket context.

The key is governance: define where AI is allowed to act, keep humans in the loop for approvals, protect sensitive code and secrets, and measure outcomes such as false-positive reduction and mean time to remediate (MTTR). Done right, AI becomes a force multiplier across build, deploy, and run.
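Two of those governance ideas, an explicit allowlist of what AI may do unattended and the outcome metrics, can be sketched in a few lines. This is a minimal sketch under assumptions: the action names and the two-tier policy are hypothetical, and MTTR is computed here simply as the mean of (fixed minus opened) over remediated findings.

```python
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical policy: actions an AI assistant may take on its own,
# versus actions that need a named human approver.
AUTO_ALLOWED = {"comment_on_pr", "open_ticket"}
NEEDS_APPROVAL = {"push_fix", "merge_pr", "change_policy"}


def authorise(action: str, approved_by: Optional[str] = None) -> bool:
    if action in AUTO_ALLOWED:
        return True
    if action in NEEDS_APPROVAL:
        return approved_by is not None
    return False  # anything not explicitly listed is denied by default


def mean_time_to_remediate(records: list[tuple[datetime, datetime]]) -> timedelta:
    """MTTR over (opened, fixed) timestamp pairs for remediated findings."""
    durations = [fixed - opened for opened, fixed in records]
    return sum(durations, timedelta()) / len(durations)


def false_positive_reduction(fp_before: int, total_before: int,
                             fp_after: int, total_after: int) -> float:
    """Relative drop in the false-positive rate, e.g. 0.7 means 70% lower."""
    rate_before = fp_before / total_before
    rate_after = fp_after / total_after
    return (rate_before - rate_after) / rate_before


# Made-up sample data for illustration only.
records = [
    (datetime(2024, 1, 1, 9), datetime(2024, 1, 3, 9)),  # fixed in 2 days
    (datetime(2024, 1, 2, 9), datetime(2024, 1, 6, 9)),  # fixed in 4 days
]
print(authorise("merge_pr"))                       # False: no approver
print(authorise("merge_pr", approved_by="alice"))  # True
print(mean_time_to_remediate(records))             # 3 days, 0:00:00
print(false_positive_reduction(300, 1000, 90, 1000))  # roughly 0.7
```

Denying unlisted actions by default keeps the policy safe as new AI capabilities appear; each one has to be consciously added to a tier.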


AI Infrastructure & Platform Engineering with Mirantis k0rdent

Beyond using AI inside the SDLC, organisations also need a governed platform for deploying and operating AI workloads at scale.

AI projects often stall after the pilot phase because the infrastructure behind them is fragmented across clusters, clouds, and operating models. Platform teams need a repeatable way to provision secure, governed environments for AI-powered applications and machine-learning workloads without rebuilding the platform for every use case.

Mirantis k0rdent brings a Kubernetes-native, declarative approach to that challenge, helping teams compose AI-ready internal developer platforms with reusable templates, centralised lifecycle management, and golden paths for security and scale. The outcome is faster time to value for AI initiatives, less platform toil, and a more consistent way to run modern workloads across cloud, on-prem, and edge.
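The "reusable template plus golden path" idea can be illustrated independently of any product. k0rdent's own templates are Kubernetes-native and declarative, as described above; this sketch deliberately does not use or model k0rdent's actual resources. It only shows the layering concept in plain Python, and every name in it (`GUARDRAILS`, `INFERENCE_TEMPLATE`, the keys) is hypothetical.

```python
import copy

# Hypothetical platform guardrails that every AI workload environment inherits.
GUARDRAILS = {
    "network": {"default_deny_ingress": True},
    "security": {"run_as_non_root": True, "image_signing_required": True},
}

# Hypothetical reusable template for a GPU-backed inference environment.
INFERENCE_TEMPLATE = {
    "gpu": {"count": 1},
    "autoscaling": {"min_replicas": 1, "max_replicas": 3},
    "security": {"run_as_non_root": True},
}


def deep_merge(base: dict, override: dict) -> dict:
    """Recursively merge override into a copy of base; override wins on conflicts."""
    result = copy.deepcopy(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(result.get(key), dict):
            result[key] = deep_merge(result[key], value)
        else:
            result[key] = copy.deepcopy(value)
    return result


def render_environment(template: dict, team_request: dict) -> dict:
    # Team choices override template defaults; guardrails are applied last,
    # so a team request cannot switch them off.
    return deep_merge(deep_merge(template, team_request), GUARDRAILS)


request = {"gpu": {"count": 4}, "security": {"run_as_non_root": False}}
env = render_environment(INFERENCE_TEMPLATE, request)
print(env)
```

Applying guardrails as the final layer is what turns a template into a golden path: teams get self-service choices (GPU count here) while security settings stay fixed.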

Organisations in every industry now have Generative AI initiatives across various parts of their business, and forecasts suggest the US market alone will grow at a 40.6% CAGR, from $25B to $280B, over the next few years, with the US representing only 40% of the overall Generative AI market. At Nuaware, we see this across several parts of the DevSecOps chain: GenAI, AIOps, agentic AI for LLMs, and AI-powered Infrastructure as Code. These projects span developer, engineering, compliance, and operations teams.
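As a quick consistency check on the growth figures quoted above, the compound annual growth rate links the start value, the end value and the number of years $n$. Solving for $n$ with $25B, $280B and 40.6% gives roughly seven years; this only checks that the numbers agree with each other and does not verify the forecast itself.

```latex
\text{CAGR} = \left(\frac{V_{\text{end}}}{V_{\text{start}}}\right)^{1/n} - 1
\quad\Longrightarrow\quad
n = \frac{\ln\left(V_{\text{end}} / V_{\text{start}}\right)}{\ln(1 + \text{CAGR})}
  = \frac{\ln(280/25)}{\ln 1.406}
  = \frac{\ln 11.2}{\ln 1.406}
  \approx \frac{2.416}{0.341}
  \approx 7.1
```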

- Financial Services/FinTech
- Insurance
- Healthcare
- Public Sector
- Telecommunications
- Energy
- Retail/Ecommerce
- Technology/SaaS/ISVs
- Transportation/Logistics

Roles

Who cares about AI for DevSecOps?

- Platform Engineering Manager
- Developer Platform Owner
- DevOps/DevSecOps Lead
- Application Security (AppSec) Lead
- Product Security
- Security Engineering Lead
- Cloud Security Architect
- CISO/Head of Security
- SRE/Operations Lead
- Head of Engineering
- Engineering Managers

Key Discovery Questions

Answering these questions helps uncover risks and align your strategy with best practices in AI for DevSecOps.

1. Are your developers using AI as part of their everyday processes?
2. Which security tools generate the most “noise,” and how do you currently prioritise what actually matters?
3. Do developers have an easy way to get “how do I fix this?” guidance in context (IDE, PR, ticket), or does it rely on specialists?
4. How are you provisioning and governing AI/ML environments today, and is that process repeatable across teams?
5. Can your platform team offer self-service AI environments with guardrails, or does every new AI project require bespoke setup?
6. How are you managing AI workloads across cloud, on-prem, and edge without increasing operational toil or locking teams into one infrastructure choice?
